The transition from large language models (LLMs) that "predict text" to AI agents that "execute intent" represents a structural shift in corporate resource allocation. While first-wave AI adoption focused on individual productivity—largely through chat interfaces—the current phase involves integrating autonomous agents into core business workflows. This shift forces CEOs to move beyond superficial pilot programs and address the fundamental friction between automated agency and human oversight. Success in this transition is not determined by the sophistication of the underlying model, but by the clarity of the Operational Handover Protocol: the specific conditions under which a machine is permitted to commit company resources, modify data, or represent the brand to a customer.

The Taxonomy of Agentic Autonomy

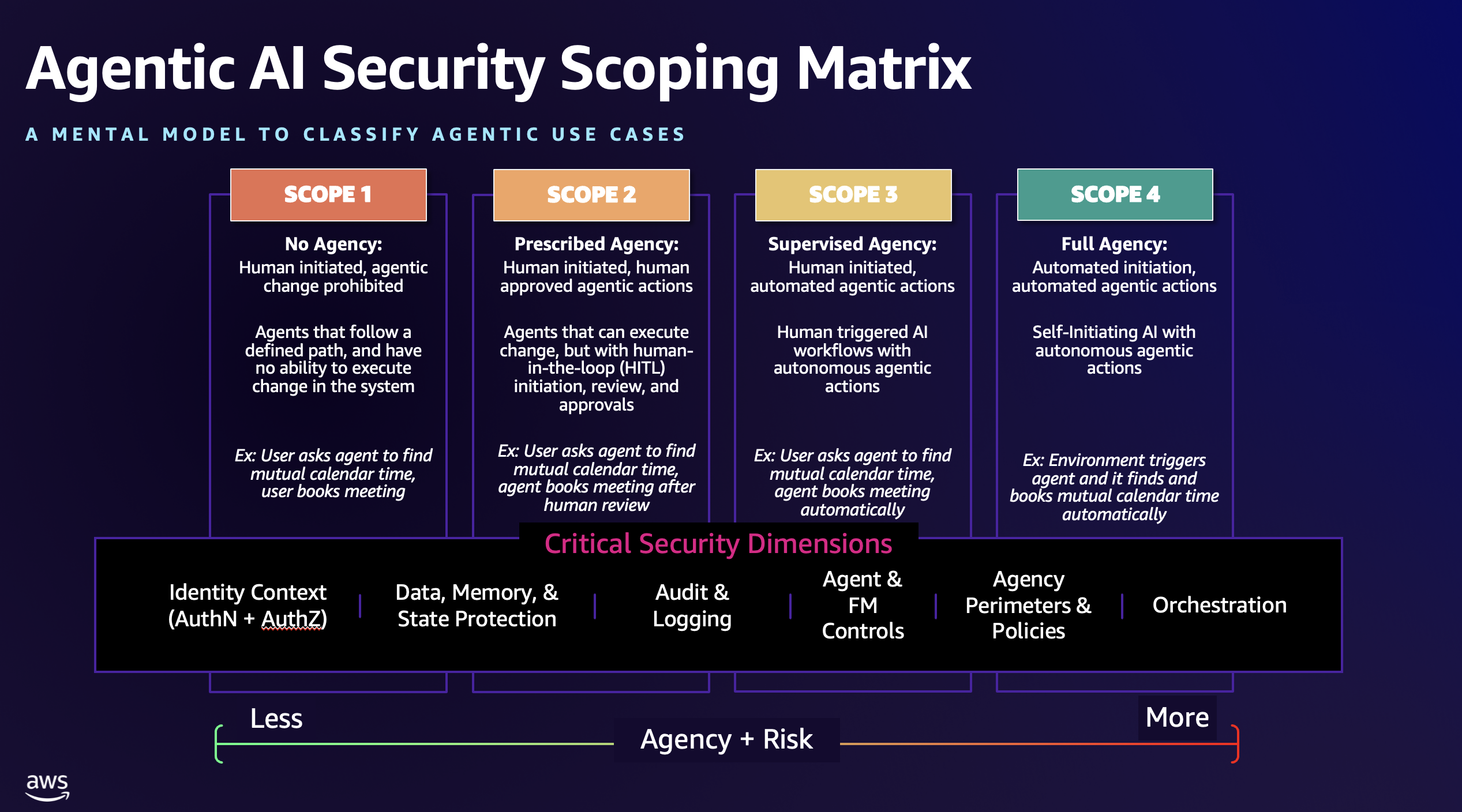

To analyze how organizations are preparing for this shift, one must first define what constitutes an "agent" versus a "tool." A tool requires constant human prompting (Low Autonomy); an agent operates against a high-level objective, decomposing it into sub-tasks and executing them without interim approval (High Autonomy).

- Level 1: Passive Retrieval. Agents that search internal databases to provide synthesized answers. Risk is limited to informational accuracy.

- Level 2: Transactional Support. Agents with "write" access to specific software (CRMs, ERPs). They can update a lead's status or cancel a subscription. Risk involves data integrity.

- Level 3: Negotiated Agency. Agents that can interact with external parties, such as negotiating a procurement contract within pre-defined price bands. Risk involves financial liability and legal commitment.

- Level 4: Full Workflow Ownership. Agents that manage an entire business function (e.g., automated accounts payable) with only exception-based human intervention. Risk is systemic.
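
One way to make the taxonomy operational is to encode the levels as an ordered type and check every requested action against the agent's assigned level. The sketch below is illustrative only: the action names and the `REQUIRED_LEVEL` mapping are hypothetical, and the risk labels are taken directly from the list above.

```python
from enum import IntEnum


class AutonomyLevel(IntEnum):
    """The four levels from the taxonomy above, ordered by increasing autonomy."""
    PASSIVE_RETRIEVAL = 1
    TRANSACTIONAL_SUPPORT = 2
    NEGOTIATED_AGENCY = 3
    FULL_WORKFLOW_OWNERSHIP = 4


# Primary risk named for each level in the taxonomy.
PRIMARY_RISK = {
    AutonomyLevel.PASSIVE_RETRIEVAL: "informational accuracy",
    AutonomyLevel.TRANSACTIONAL_SUPPORT: "data integrity",
    AutonomyLevel.NEGOTIATED_AGENCY: "financial liability and legal commitment",
    AutonomyLevel.FULL_WORKFLOW_OWNERSHIP: "systemic",
}

# Hypothetical mapping from an action type to the minimum level allowed to perform it.
REQUIRED_LEVEL = {
    "search_knowledge_base": AutonomyLevel.PASSIVE_RETRIEVAL,
    "update_crm_record": AutonomyLevel.TRANSACTIONAL_SUPPORT,
    "negotiate_contract": AutonomyLevel.NEGOTIATED_AGENCY,
    "run_accounts_payable": AutonomyLevel.FULL_WORKFLOW_OWNERSHIP,
}


def is_permitted(agent_level: AutonomyLevel, action: str) -> bool:
    """Return True if the agent's level is high enough; unknown actions are denied."""
    required = REQUIRED_LEVEL.get(action)
    return required is not None and agent_level >= required
```

Denying unknown actions by default is a deliberate choice here: an agent should gain a capability only when someone has explicitly assigned it a level.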

The Cost Function of Trust and Latency

Every deployment of an AI agent introduces a trade-off between Action Latency (how fast the business moves) and Error Probability. CEOs are currently engineering systems to manage this cost function.

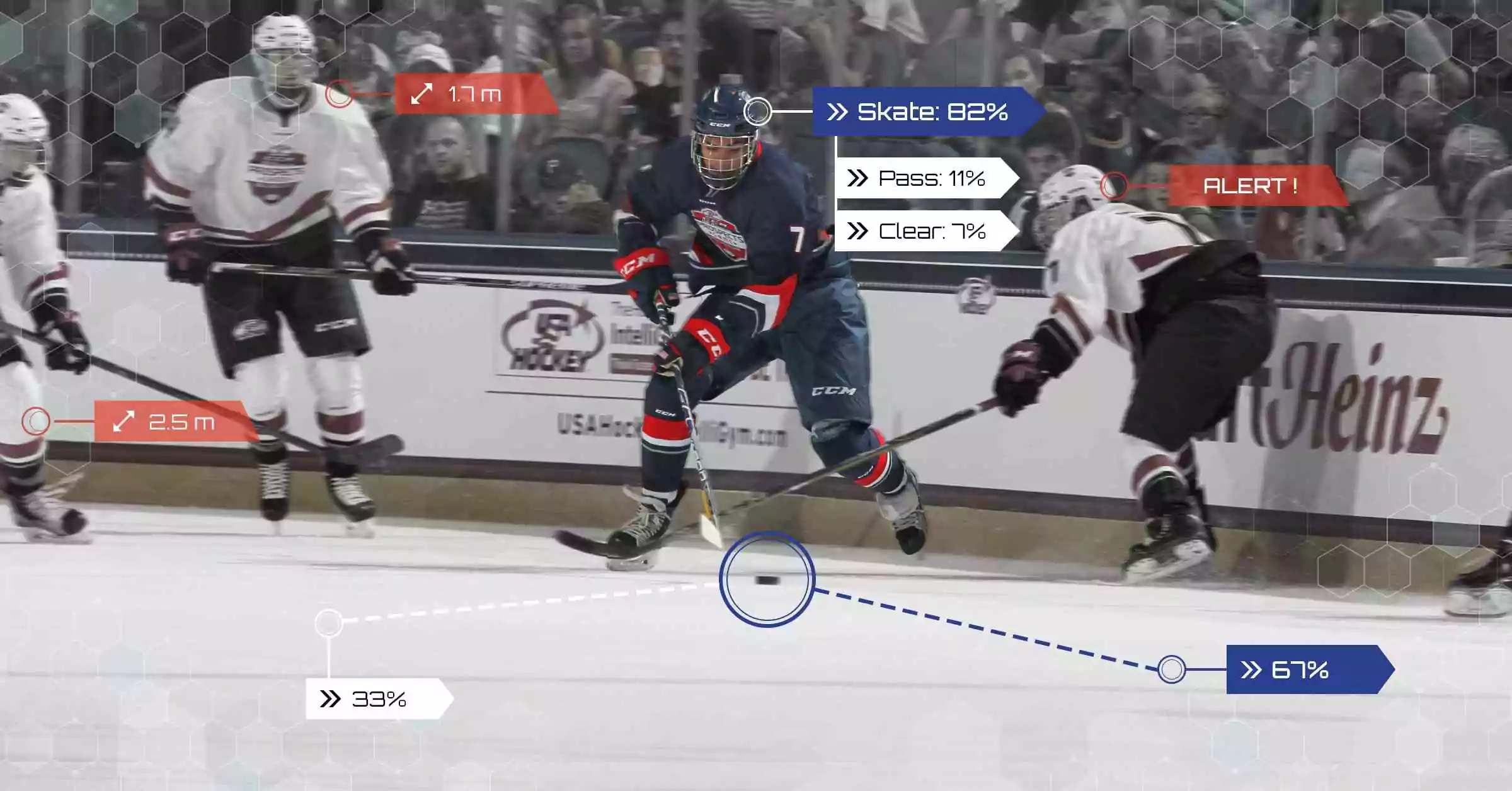

In a manual environment, the cost of an error is often high, but the frequency is low. In an agentic environment, the frequency of "micro-errors" increases. If an agent handles 1,000 customer inquiries simultaneously, a 1% hallucination rate produces roughly 10 flawed responses at once, each a potential brand crisis. To mitigate this, firms are implementing Verification Loops.
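
The arithmetic behind that example can be written out, under the simplifying assumption (not stated in the text) that each of the N inquiries fails independently with the same probability p:

```latex
\mathbb{E}[\text{errors}] = N p = 1000 \times 0.01 = 10,
\qquad
P(\text{at least one error}) = 1 - (1 - p)^{N} = 1 - 0.99^{1000} \approx 0.99996
```

At this volume the question is no longer whether an error occurs but how many, which is why the mitigation has to be systematic rather than case by case.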

The logic follows a tripartite structure:

- Deterministic Guardrails: Hard-coded rules that the agent cannot override (e.g., "Never offer a discount exceeding 15%").

- Probabilistic Monitoring: A second, smaller LLM that audits the primary agent's outputs for tone, bias, or factual inconsistency before the user sees them.

- Human-in-the-Loop (HITL) Thresholds: Specific triggers, such as a high-value customer sentiment drop or a transaction exceeding a certain dollar amount, that automatically pause the agent and alert a human supervisor.
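
The three layers above can be sketched as a single check that runs before any agent action is released. This is a minimal illustration, not a reference design: the dollar threshold, the audit score cut-off, and the `toy_audit` function (a stand-in for the second, smaller model) are all assumptions made for the example. Only the 15% discount rule comes from the text.

```python
from dataclasses import dataclass
from typing import Callable

MAX_DISCOUNT_PCT = 15.0        # deterministic guardrail from the example above
HITL_AMOUNT_USD = 1_000.0      # hypothetical dollar threshold for human review
MIN_AUDIT_SCORE = 0.8          # hypothetical cut-off for the auditor's score


@dataclass
class ProposedAction:
    reply_text: str
    discount_pct: float = 0.0
    amount_usd: float = 0.0


def verify(action: ProposedAction, audit: Callable[[str], float]) -> str:
    """Return 'block', 'escalate', or 'allow' for a proposed agent action.

    `audit` stands in for the second, smaller model: it takes the draft
    reply and returns a score in [0, 1], where higher means safer.
    """
    # 1. Deterministic guardrail: a hard rule the agent cannot override.
    if action.discount_pct > MAX_DISCOUNT_PCT:
        return "block"
    # 2. Probabilistic monitoring: audit the draft before the user sees it.
    if audit(action.reply_text) < MIN_AUDIT_SCORE:
        return "escalate"
    # 3. HITL threshold: pause and alert a human above a dollar amount.
    if action.amount_usd > HITL_AMOUNT_USD:
        return "escalate"
    return "allow"


def toy_audit(text: str) -> float:
    """Placeholder auditor: flags replies that contain the word 'guarantee'."""
    return 0.2 if "guarantee" in text.lower() else 0.95
```

The ordering matters in this sketch: the cheap, deterministic rule runs first, so a hard violation is blocked without ever depending on the probabilistic auditor.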

Structural Realignment of the Workforce

The introduction of agents does not simply "replace" jobs; it reconfigures the Unit of Work. Traditional job descriptions are being decomposed into "Tasks Suited for Agents" (high volume, high repetition, low ambiguity) and "Tasks Reserved for Humans" (high ambiguity, high empathy, strategic pivot points).

This creates a new organizational bottleneck: The Management of Machines. Middle management, historically responsible for overseeing people, is being retrained to oversee "Agent Swarms." This requires a shift from behavioral management to technical auditing. A manager’s value lies less in their ability to motivate a team than in their ability to debug a process flow where an agent has stalled.

The psychological impact on employees is a primary friction point. If an employee perceives the agent as a tool that removes "drudge work" (e.g., data entry), adoption is high. If they perceive it as a competitor for their core competency (e.g., creative writing or strategic analysis), they will engage in Silent Sabotage—refusing to provide the necessary feedback loops that help the agent improve. CEOs are addressing this by tying employee incentives to the performance of the agents they supervise, effectively turning workers into "Agent Product Managers."

The Customer Paradox: Transparency vs. Friction

Customers demand the speed of AI but the accountability of humans. The strategic challenge lies in the "Disclosure Threshold."

Research into customer behavior suggests an inverted U-shaped utility curve regarding AI disclosure: both extremes perform worse than the middle. Total transparency ("You are speaking to a bot") can lower customer expectations and increase frustration if the bot is limited. Zero transparency (passing off an agent as a human) creates a catastrophic trust deficit when the bot inevitably reveals its nature through a logic error.

The emerging standard is Proactive Capability Labeling. Rather than just stating "I am an AI," agents are being programmed to state their specific utility: "I am an automated assistant capable of rescheduling your flight or processing a refund; for complex billing disputes, I will connect you to a specialist." This frames the agent as a shortcut rather than a barrier.
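
As a rough sketch of how such a label might be generated from an agent's configured capabilities rather than hard-coded, consider the following. The function name and parameters are hypothetical. The output wording mirrors the example sentence above.

```python
def capability_label(can_do: list[str], handoff_topic: str) -> str:
    """Build a disclosure message that states what the agent can do
    and where it hands off to a human."""
    if not can_do:
        raise ValueError("an agent with no capabilities should not be customer-facing")
    if len(can_do) == 1:
        abilities = can_do[0]
    else:
        # Join as "a, b or c" so the sentence reads naturally.
        abilities = ", ".join(can_do[:-1]) + " or " + can_do[-1]
    return (
        f"I am an automated assistant capable of {abilities}; "
        f"for {handoff_topic}, I will connect you to a specialist."
    )
```

Deriving the label from the same capability list that governs the agent's permissions keeps the promise made to the customer in step with what the agent is actually allowed to do.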

Data Governance as the Competitive Moat

The effectiveness of an agent is limited by the quality of its context: the specific internal data it can access to make decisions (in practice, whatever is loaded into the model's Context Window). Most organizations suffer from "Data Silos," where, for example, the customer service agent cannot see the shipping logistics data.

To prepare, CEOs are shifting capital from "Model Tuning" to "Data Engineering." The goal is the creation of a Unified Knowledge Graph. If an agent doesn't know that a customer’s package was delayed three times this month, it cannot apply the correct empathy or compensation logic.

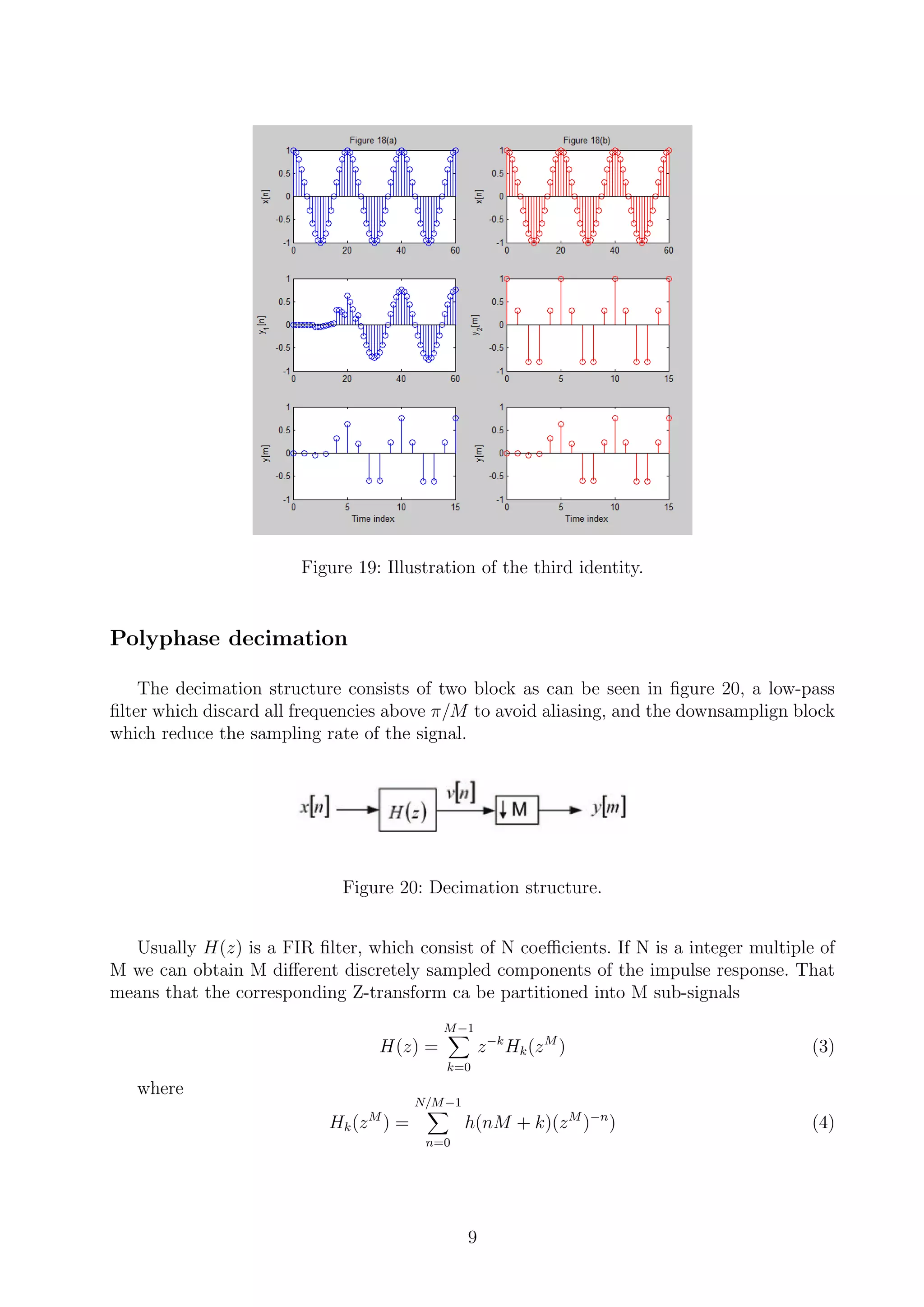

The Limitation of RAG (Retrieval-Augmented Generation)

While RAG is the current industry standard for giving agents data, it is a "pull" mechanism. It waits for a query. The next generation of agents uses "long-term memory" modules that allow them to "remember" past interactions across different platforms. This raises significant privacy and compliance concerns, particularly under GDPR or CCPA frameworks. The strategy here is not just data access, but Data Permissions Management: ensuring an agent doesn't accidentally leak a CEO's private email content to a junior analyst because they share a "General" data pool.
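
Data Permissions Management can be illustrated with a retrieval step that filters by the requester's role before any matching happens. The sketch below uses naive keyword matching in place of a real retriever. The `Document` type, the role names, and the sample pool are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_roles: frozenset[str]   # roles permitted to see this document


def retrieve(query: str, requester_role: str, pool: list[Document]) -> list[Document]:
    """Naive keyword retrieval that filters by permission BEFORE matching.

    Filtering first means restricted text never reaches the agent's
    prompt, so it cannot be leaked in a generated answer.
    """
    visible = [d for d in pool if requester_role in d.allowed_roles]
    terms = query.lower().split()
    return [d for d in visible if any(t in d.text.lower() for t in terms)]


# A shared "General" pool containing one restricted and one open document.
pool = [
    Document("memo-1", "Q3 acquisition plan, board only", frozenset({"ceo"})),
    Document("faq-7", "Q3 holiday schedule for all staff", frozenset({"ceo", "analyst"})),
]
```

Here a query for "Q3 plan" from the analyst role returns only `faq-7`, while the same query from the CEO role returns both documents. The point of the design is that access control sits in the retrieval layer, not in the model's instructions.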

The Liability Shift and the "Black Box" Problem

One of the most significant unaddressed risks is the Legal Attribution of Agentic Error. When an agent makes a mistake that leads to financial loss, who is liable?

Current legal frameworks are ill-equipped for "Distributed Agency." If an AI agent trained by Company A, running on a model by Company B, utilizing a plugin by Company C, makes an error on Company D’s platform, the litigation path is opaque.

CEOs are mitigating this through Algorithmic Insurance and "Snapshot Auditing." Every decision an agent makes is logged in a tamper-evident ledger (often blockchain-adjacent, such as hash-chained or cryptographically signed logs) so that the chain of causality can be reconstructed during a post-mortem. This moves the organization from "Trusting the AI" to "Auditing the Outcome."
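
A decision log in which tampering is detectable can be approximated with a simple hash chain, where each entry records the SHA-256 hash of the one before it. This is a minimal sketch of that idea using only Python's standard library. The entry layout and function names are assumptions for illustration, not a description of any particular product.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(prev_hash: str, decision: dict) -> str:
    # Canonical JSON (sorted keys) so the same decision always hashes the same.
    payload = json.dumps({"prev": prev_hash, "decision": decision}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_decision(log: list[dict], decision: dict) -> None:
    """Append a decision, linking it to the hash of the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    log.append({
        "prev": prev_hash,
        "decision": decision,
        "hash": _entry_hash(prev_hash, decision),
    })


def verify_chain(log: list[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(prev_hash, entry["decision"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this detects edits to recorded entries but not deletion of the most recent ones, so in practice the latest hash would also need to be stored somewhere the agent's operators cannot rewrite.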

The Strategic Play

For an organization to successfully deploy agents at scale, the leadership must execute a three-phase transition:

- Phase I: The Shadow Period. Deploy agents in "Shadow Mode" where they generate responses or perform actions that are visible only to employees. Use this to calculate the Agent Precision Score against human benchmarks.

- Phase II: The Sandbox Expansion. Move agents to low-stakes external environments (e.g., "Contact Us" forms or FAQ automation) with strict HITL triggers.

- Phase III: The Orchestration Layer. Transition to a multi-agent system where different specialized agents (Sales Agent, Technical Support Agent, Logistics Agent) communicate with each other to solve complex, cross-departmental user requests.
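
The Agent Precision Score from Phase I is not defined further in this piece. One plausible reading, assumed here, is the share of shadow-mode cases in which the agent's output matches what the human actually did. The sketch below uses exact matching after normalization, which is a deliberately crude stand-in for a proper rubric.

```python
def agent_precision_score(shadow_outputs: list[str], human_outputs: list[str]) -> float:
    """Share of cases where the agent's shadow output matches the human's.

    Assumed definition: exact match after trimming and lowercasing. A real
    evaluation would likely need a rubric or reviewer judgment instead.
    """
    if len(shadow_outputs) != len(human_outputs):
        raise ValueError("each shadow output needs a paired human benchmark")
    if not shadow_outputs:
        raise ValueError("no cases to score")
    matches = sum(
        a.strip().lower() == h.strip().lower()
        for a, h in zip(shadow_outputs, human_outputs)
    )
    return matches / len(shadow_outputs)
```

For example, if the agent proposes `["refund", "escalate", "refund", "deny"]` and the humans chose `["Refund", "escalate", "deny", "deny"]`, three of four cases match and the score is 0.75.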

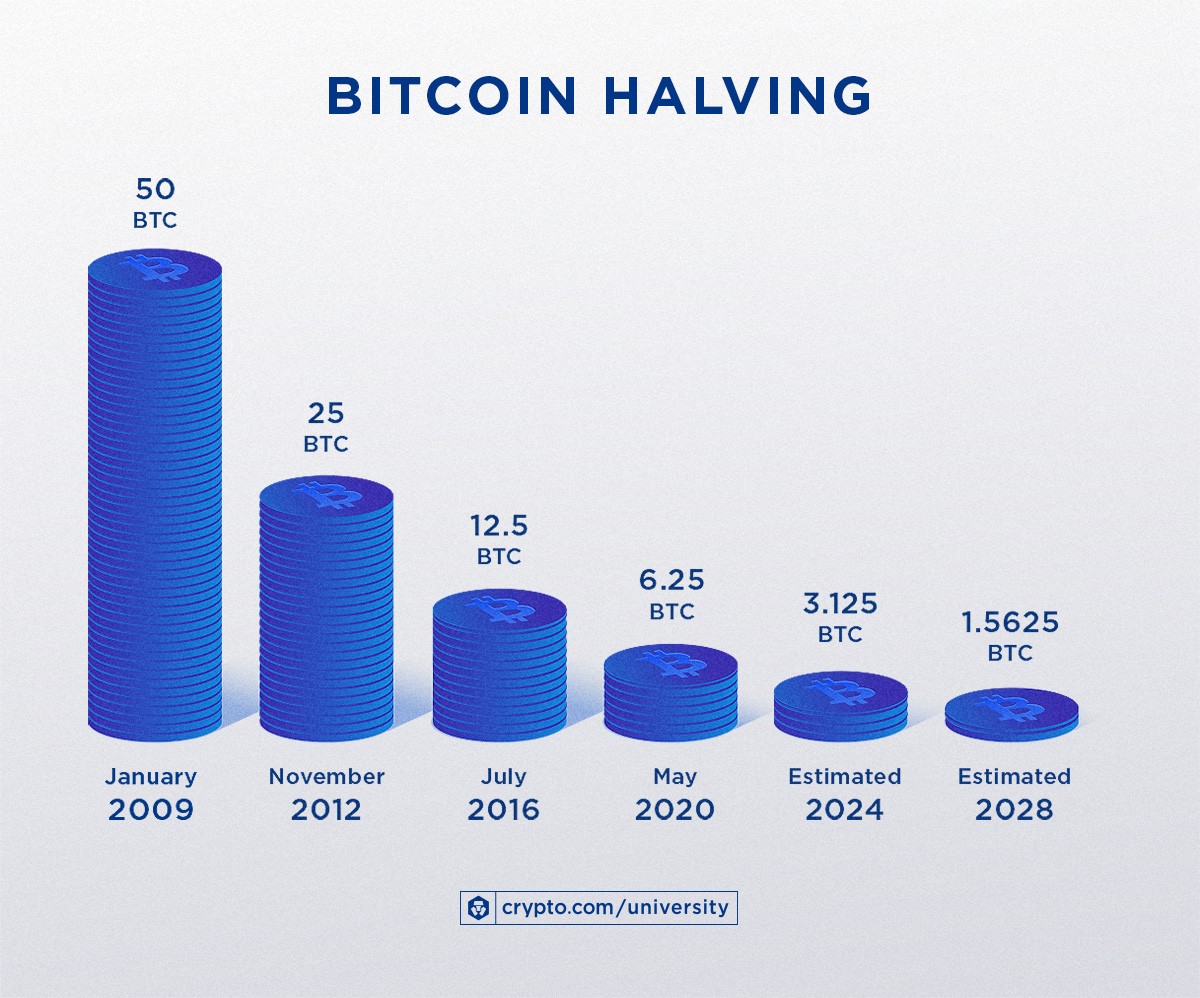

The final strategic move is the abandonment of "AI ROI" as a standalone metric. Because agents change the very structure of the business, their value cannot be captured in a simple cost-saving spreadsheet. Instead, measure the Velocity of Resolution: the time from a customer problem arising to its total, autonomous resolution. Companies that optimize for this metric can decouple their growth from their headcount, reaching a degree of scalability that headcount-bound organizations could not.
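
As a rough illustration of how Velocity of Resolution might be computed from ticket data, the sketch below takes the median time from opening to resolution across tickets that were closed without human involvement. The ticket fields (`opened_at`, `resolved_at`, `autonomous`) and the choice of the median are assumptions made for the example.

```python
from datetime import datetime, timedelta
from statistics import median


def velocity_of_resolution(tickets: list[dict]) -> timedelta:
    """Median time from problem arising to autonomous resolution.

    Each ticket is a dict with hypothetical keys 'opened_at', 'resolved_at'
    (datetime or None) and 'autonomous' (bool). Unresolved tickets and
    tickets that needed a human are excluded.
    """
    durations = [
        (t["resolved_at"] - t["opened_at"]).total_seconds()
        for t in tickets
        if t["resolved_at"] is not None and t["autonomous"]
    ]
    if not durations:
        raise ValueError("no autonomously resolved tickets to measure")
    return timedelta(seconds=median(durations))
```

Because escalated and unresolved tickets are excluded, this figure should be read alongside the share of tickets that were resolved autonomously. Otherwise an agent could look fast simply by handing every hard case to a human.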